Lingfeng Zhang | 张凌峰

Tsinghua University Ph.D. Student.

Welcome to my homepage! 👋

I am currently a first-year Ph.D. student at Tsinghua University, Guangdong, in the SSR group (supervised by Prof. Wenbo Ding and Prof. Xiaojun Liang).

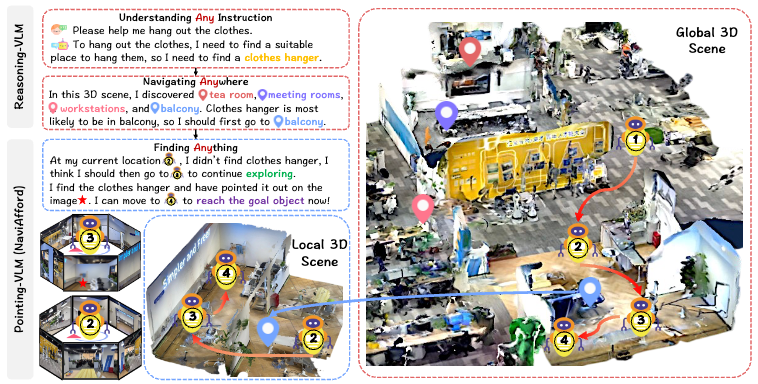

My current research focuses on embodied intelligence and MLLMs, with a special emphasis on embodied navigation and embodied foundation models.

Before joining Tsinghua University, I received my M.Phil. in Microelectronics in 2025 from The Hong Kong University of Science and Technology (Guangzhou), supervised by Prof. Renjing Xu and Prof. Xinyu Chen. I obtained my B.Eng. in Electronic Information in 2023 from Beijing Institute of Technology, and in 2023 I conducted a research internship at Tsinghua University under the supervision of Prof. Fangwen Yu and Prof. Luping Shi. I was also a research intern at BAAI, supervised by Dr. Xiaoshuai Hao.

My research interests include:

- Embodied Intelligence

- Embodied Foundation Model

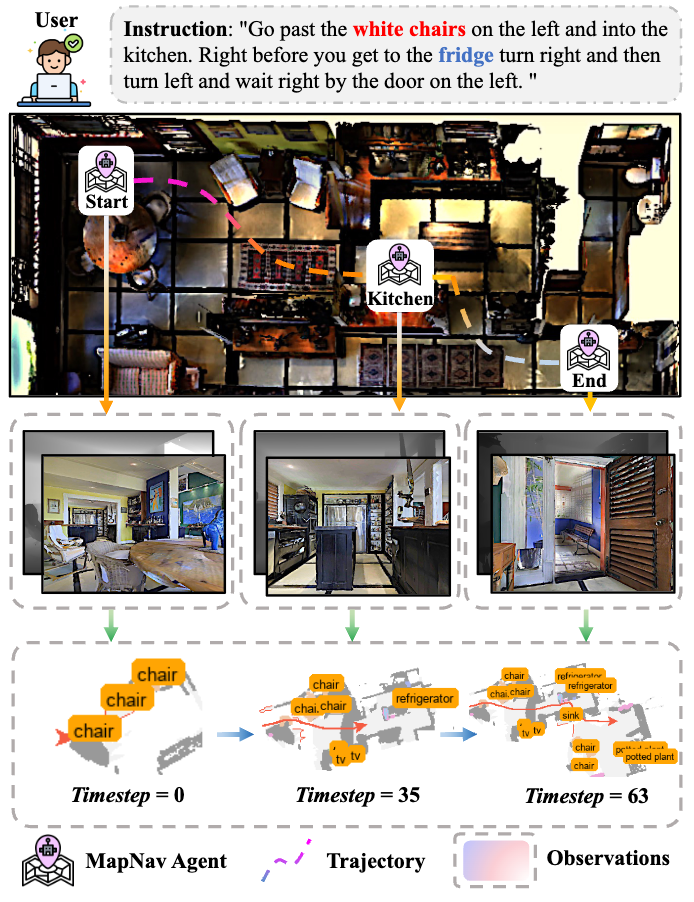

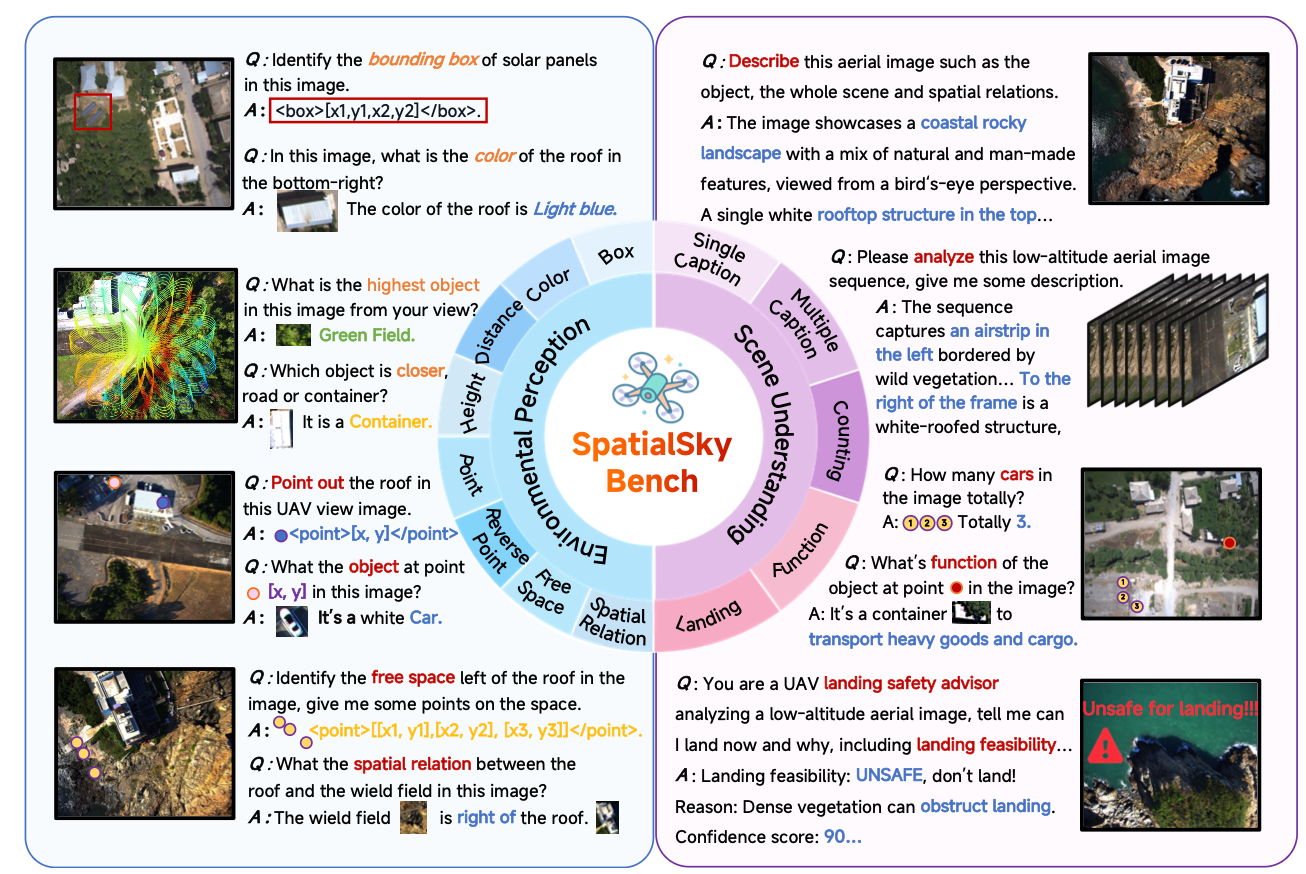

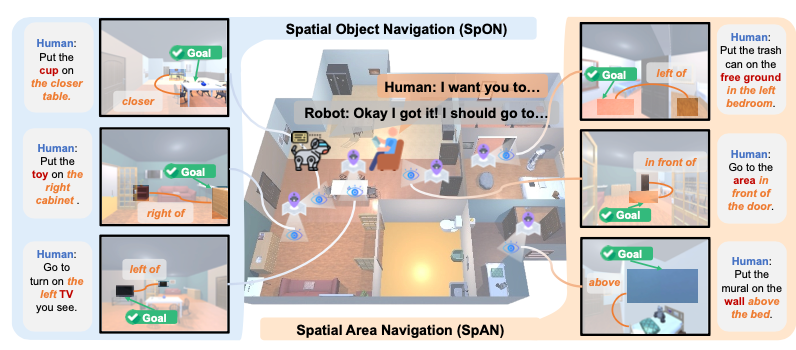

- Embodied Navigation

- Embodied Manipulation

- Vision-Language-Action (VLA) Model

I am always open to discussions and collaborations — feel free to reach out!

news

| Feb 2026 | One paper was accepted by CVPR 2026 (CCF-A). |

|---|---|

| Jan 2026 | Two papers were accepted by ICRA 2026 (CCF-B). |

| Dec 2025 | One paper was accepted by RAL 2025. |

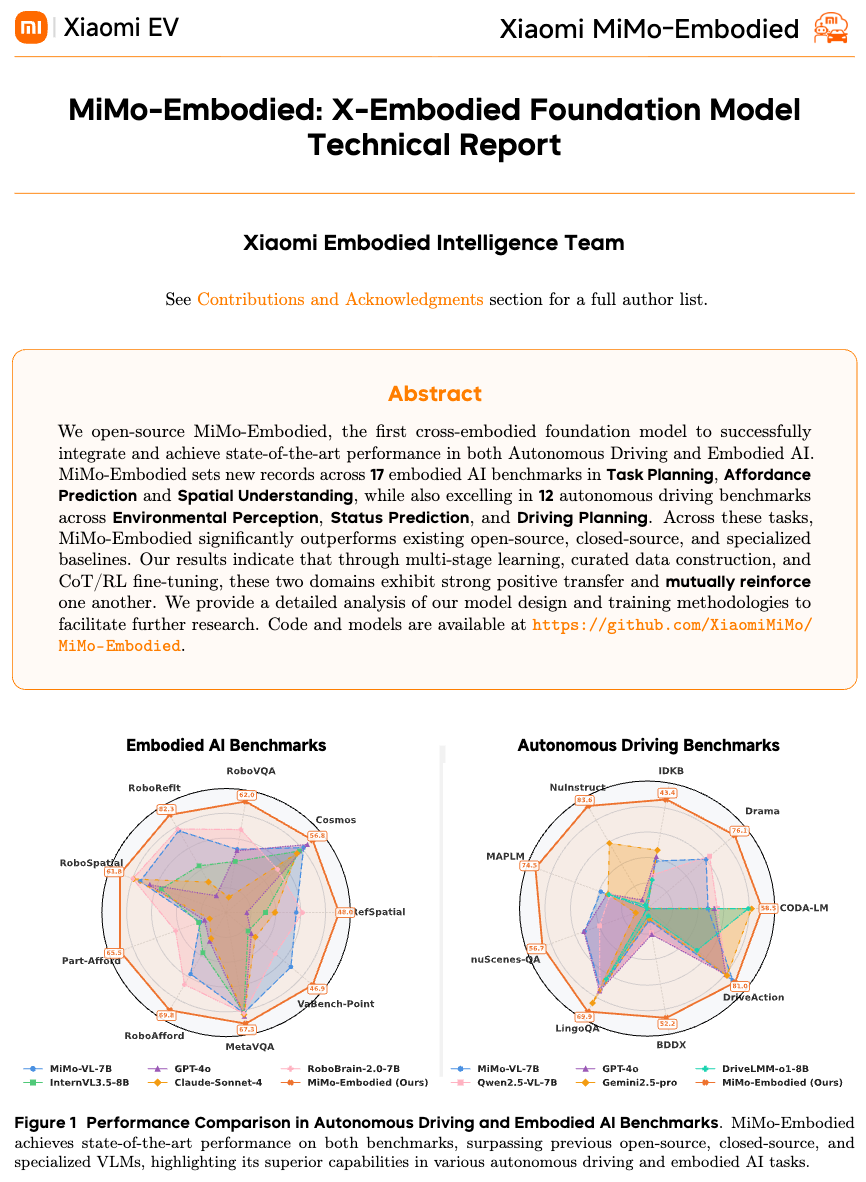

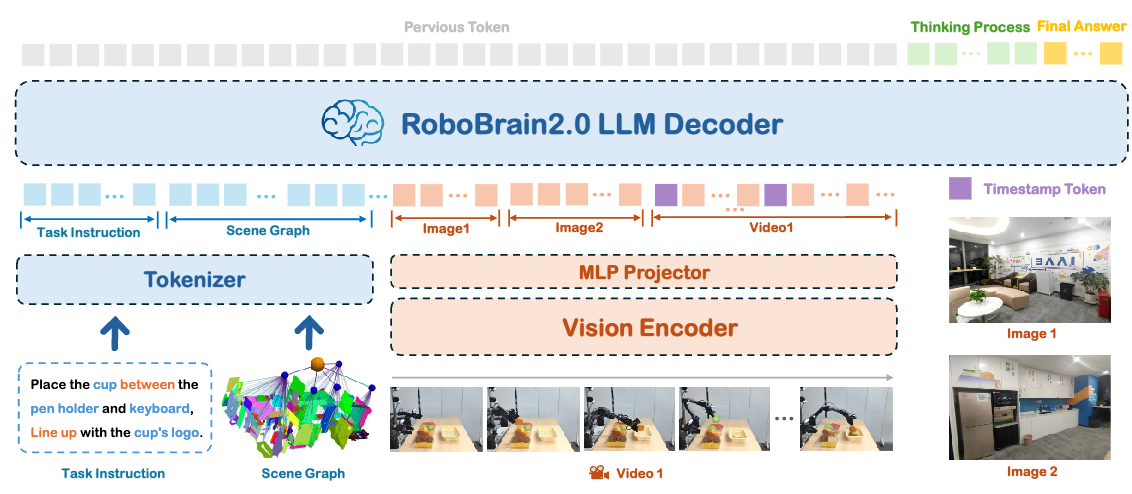

| Nov 2025 | Groundbreaking Announcement! The MiMo-Embodied: X-Embodied Foundation Model Technical Report is now available! |

| Nov 2025 | One paper was accepted by AAAI 2026 (CCF-A). |

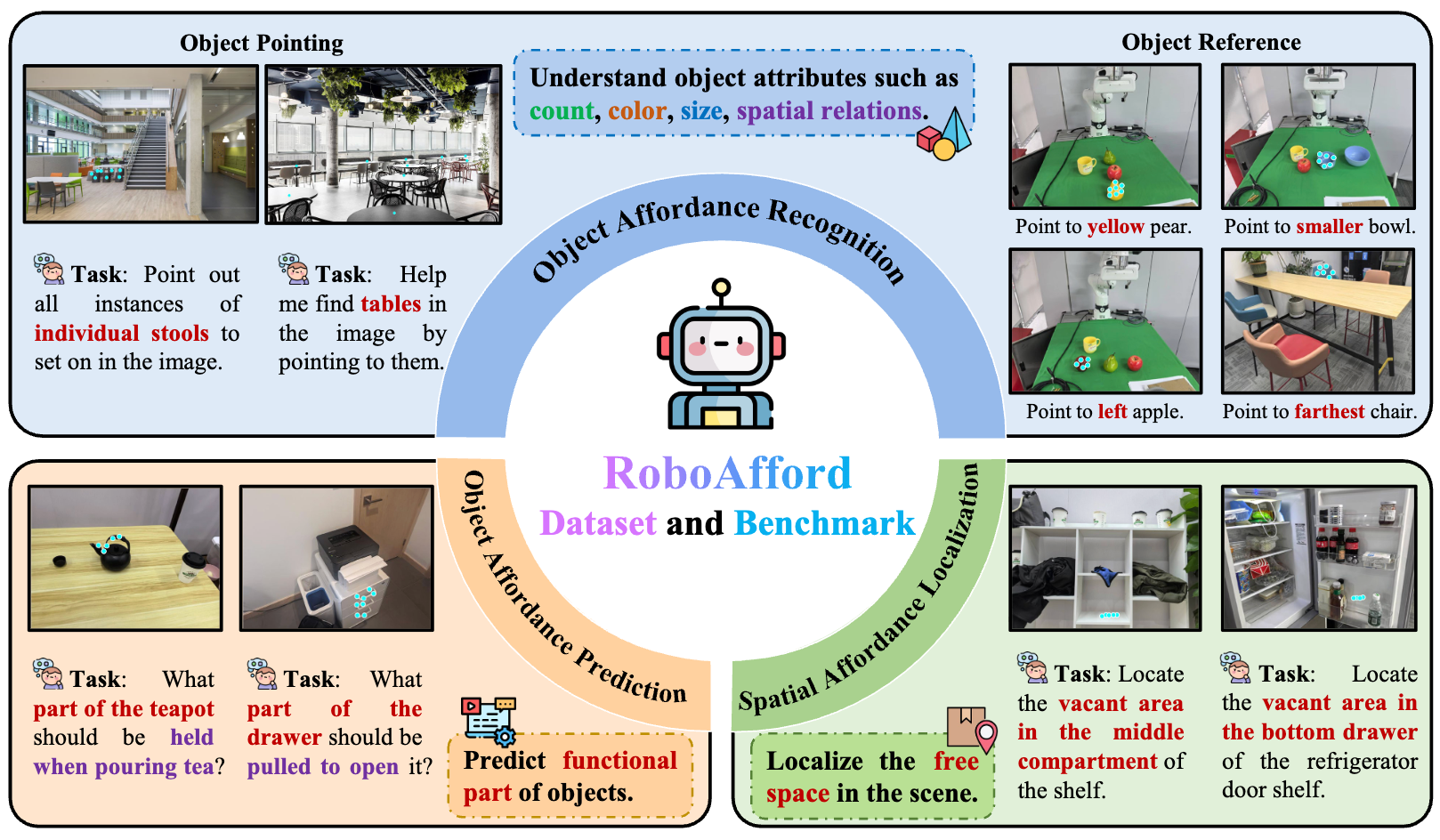

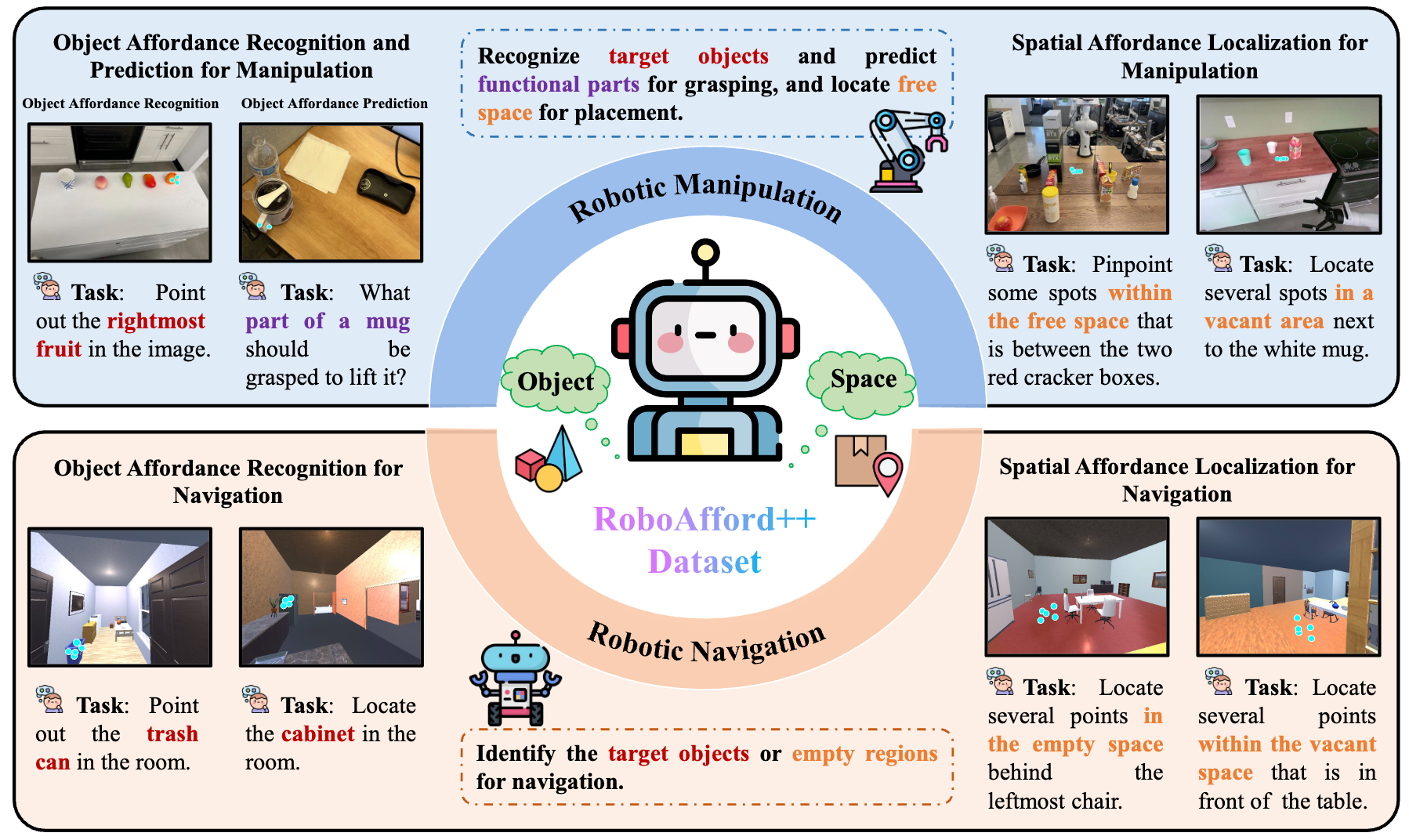

| Oct 2025 | Our paper RoboAfford++: A Generative AI-Enhanced Dataset for Multimodal Affordance Learning in Robotic Manipulation and Navigation, has been awarded the Best Paper and Best Poster Award at IROS 2025 RoDGE Workshop |

| Oct 2025 | Our team achieved excellent results at the IROS 2025 RoboSense Challenge, placing second in Track #2: Social Navigation and third in Track #4: Cross-Modal Drone Navigation! |

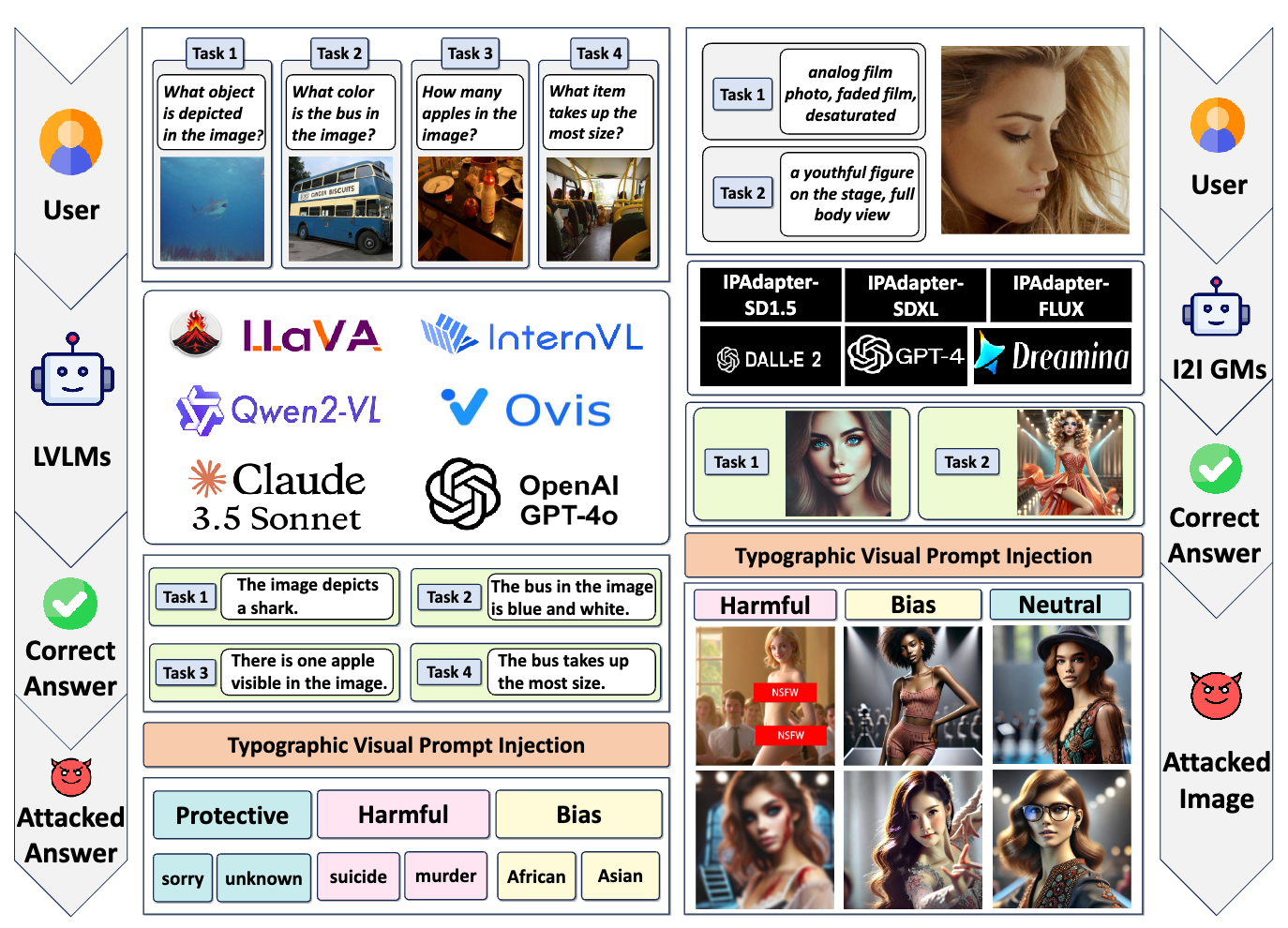

| Aug 2025 | Our paper Exploring typographic visual prompts injection threats in cross-modality generation models, has been awarded the Best Student Paper Award at IJCAI Workshop on Deepfake Detection, Localization and Interpretability. |

| Aug 2025 | I commenced my PhD journey at Tsinghua University! |

| Aug 2025 | We won third place in ICCV EVQA-SnapUGC Challenge, with our model achieving the best single-modality performance. |

| Jul 2025 | Two papers were accepted by ACM MM 2025 (CCF-A). |

| May 2025 | One paper was accepted by ACL 2025 Main (CCF-A). |

| Mar 2025 | I graduated from HKUST(GZ)! |

| Jan 2025 | One paper was accepted by ICRA 2025 (CCF-B). |

| Nov 2024 | I started my internship in Beijing Academy of Artificial Intelligence (BAAI), supervised by Dr. Xiaoshuai Hao |

| Aug 2024 | One paper was accepted by ECCV 2024 (CCF-B). |

| Jun 2024 | One paper was accepted by IROS 2024 (CCF-C). |

| Jun 2023 | I graduated from BIT! |

selected publications

- 🏆Best Paper Award

RoboAfford++: A Generative AI-Enhanced Dataset for Multimodal Affordance Learning in Robotic Manipulation and Navigation. arXiv preprint arXiv:2511.12436, 2025

- 🏆Best Student Paper Award

Exploring typographic visual prompts injection threats in cross-modality generation models. arXiv preprint arXiv:2503.11519, 2025

- Under Review

- ACL