🤖 Embodied Intelligence Research Gallery

A comprehensive showcase of my research projects in embodied intelligence, including embodied navigation, foundation models, robotic manipulation, and vision-language-action systems.

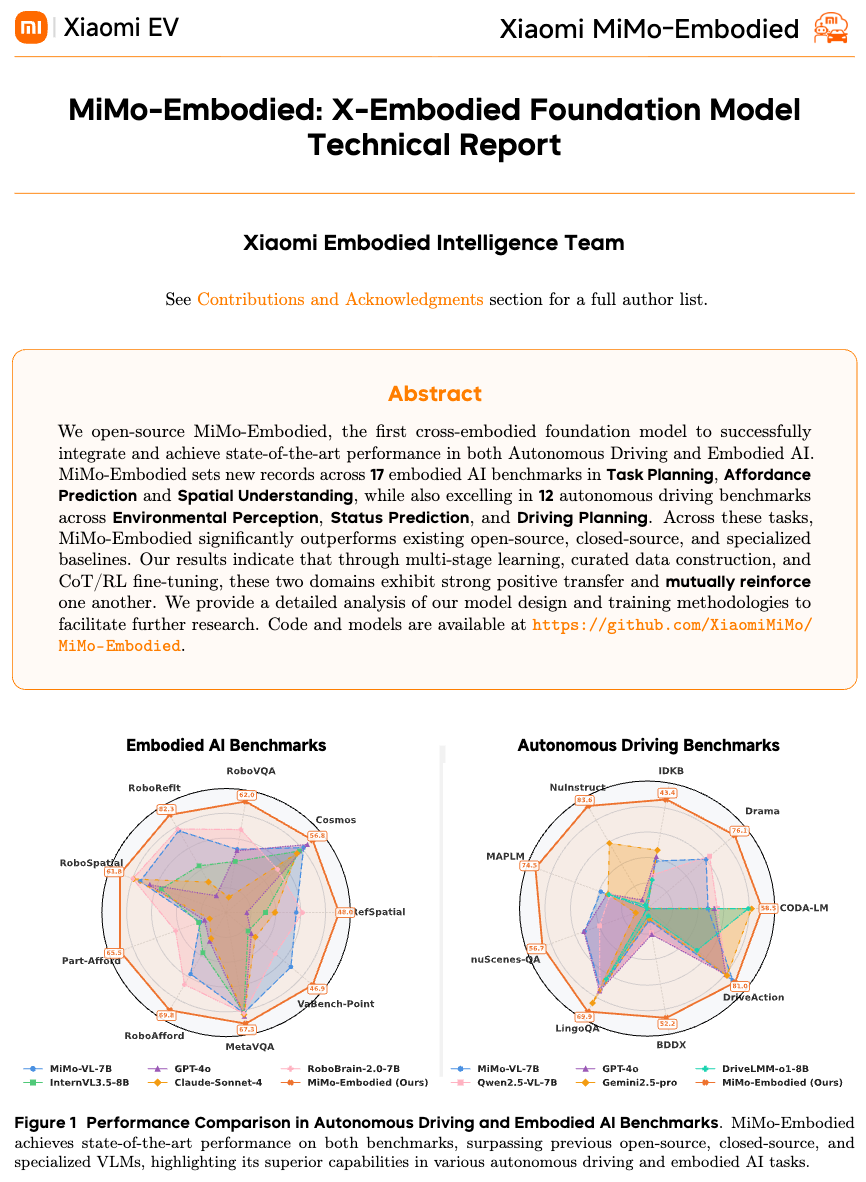

🧠 Embodied Foundation Models

MiMo-Embodied Technical Report 2025

X-Embodied Foundation Model for general-purpose embodied intelligence, enabling robots to understand, navigate, and interact with the world.

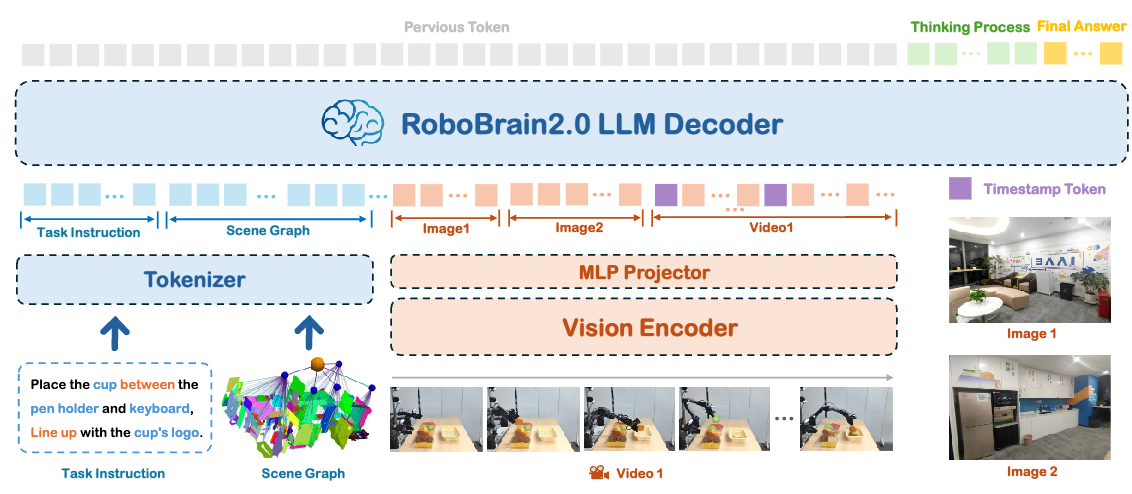

RoboBrain 2.0 Technical Report 2025

Large-scale embodied foundation model developed by BAAI, integrating vision, language, and action for comprehensive robotic intelligence.

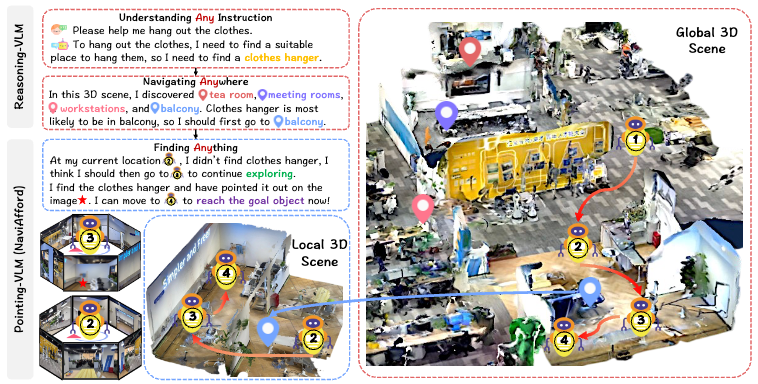

🗺️ Embodied Navigation

NavA³ Under Review 2025

Understanding Any Instruction, Navigating Anywhere, Finding Anything. A unified framework for versatile navigation tasks.

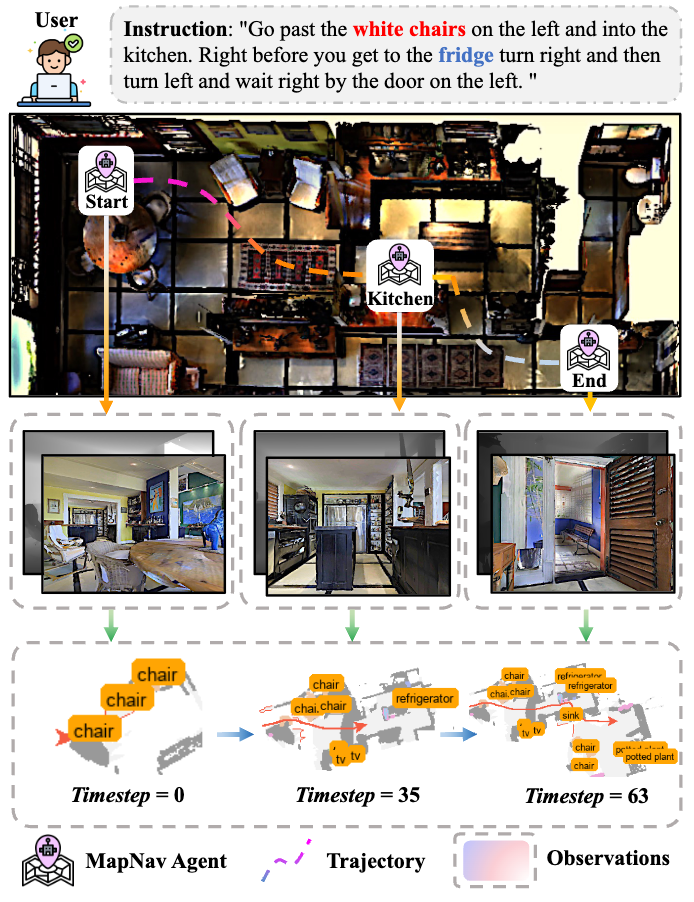

MapNav ACL 2025

A novel memory representation via annotated semantic maps for VLM-based vision-and-language navigation.

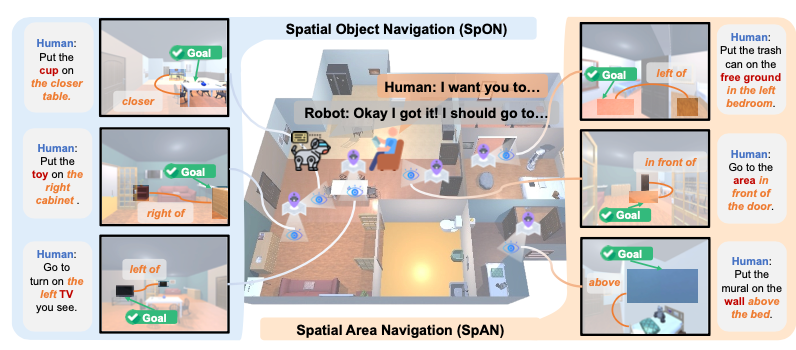

SpatialNav AAAI 2026

What You See is What You Reach: Towards Spatial Navigation with High-Level Human Instructions.

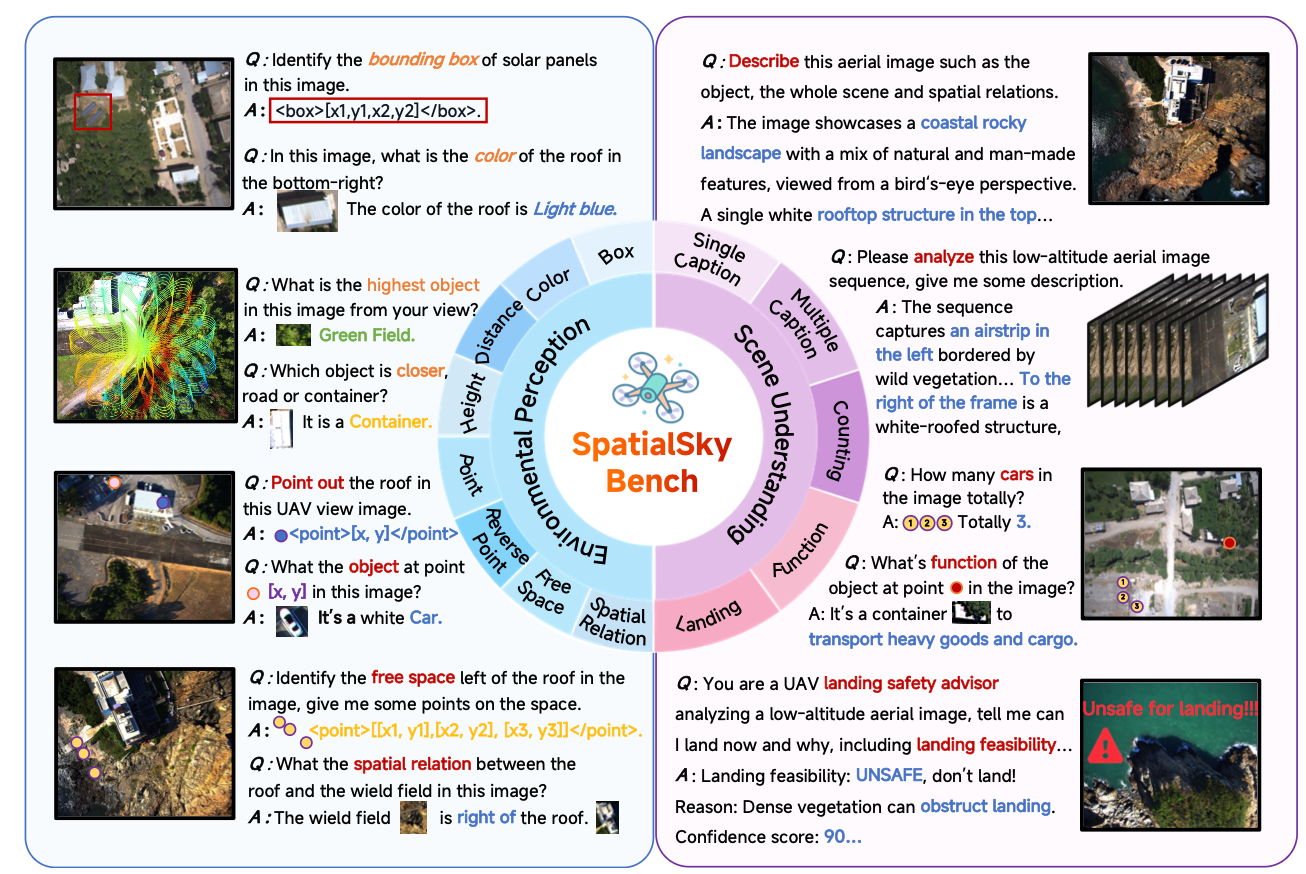

SpatialSky Under Review 2025

Is your VLM Sky-Ready? A Comprehensive Spatial Intelligence Benchmark for UAV Navigation.

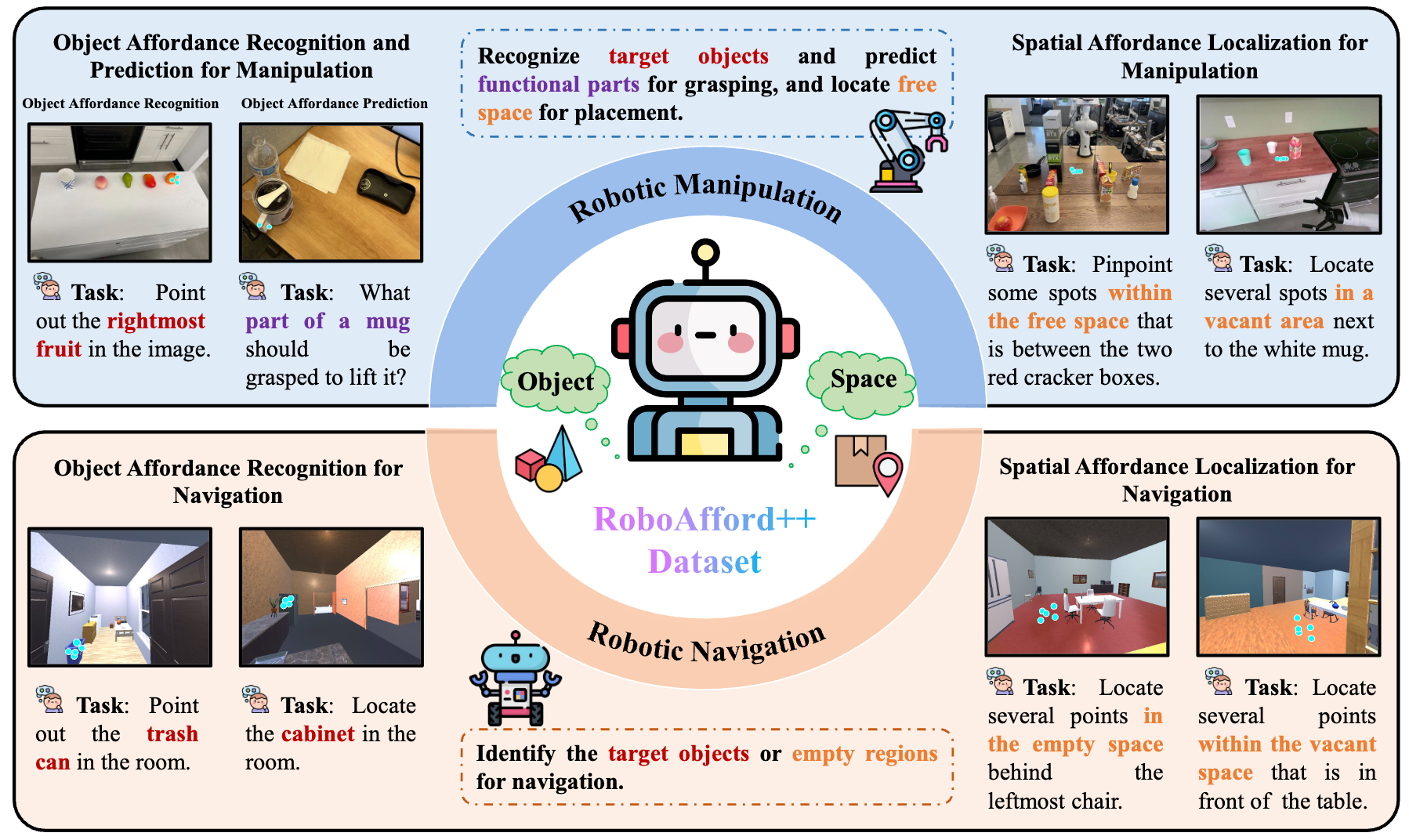

🤲 Robotic Manipulation & Affordance

RoboAfford++ 🏆 Best Paper 2025

A Generative AI-Enhanced Dataset for Multimodal Affordance Learning in Robotic Manipulation and Navigation.

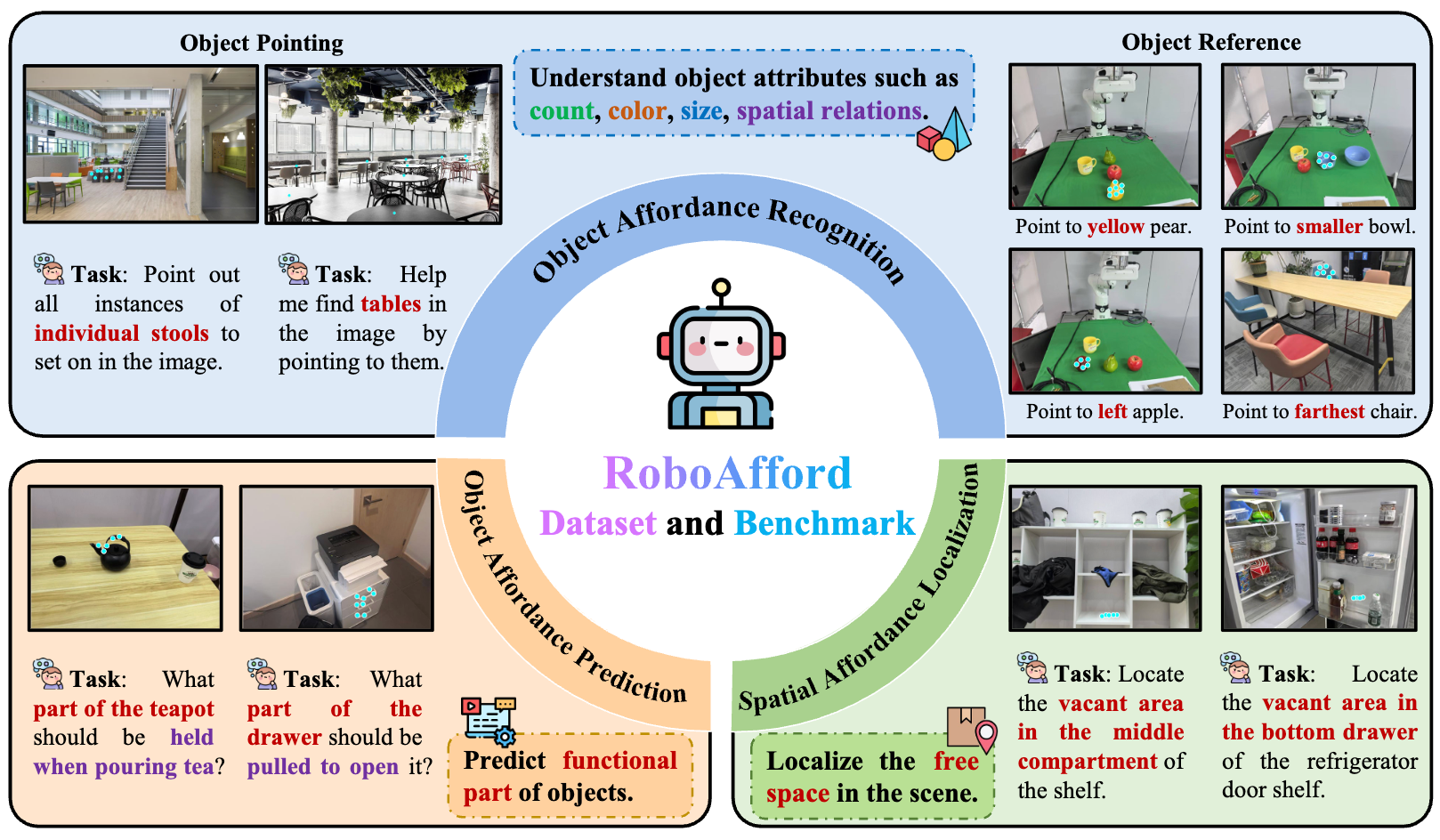

RoboAfford ACM MM 2025

A dataset and benchmark for enhancing object and spatial affordance learning in robot manipulation.

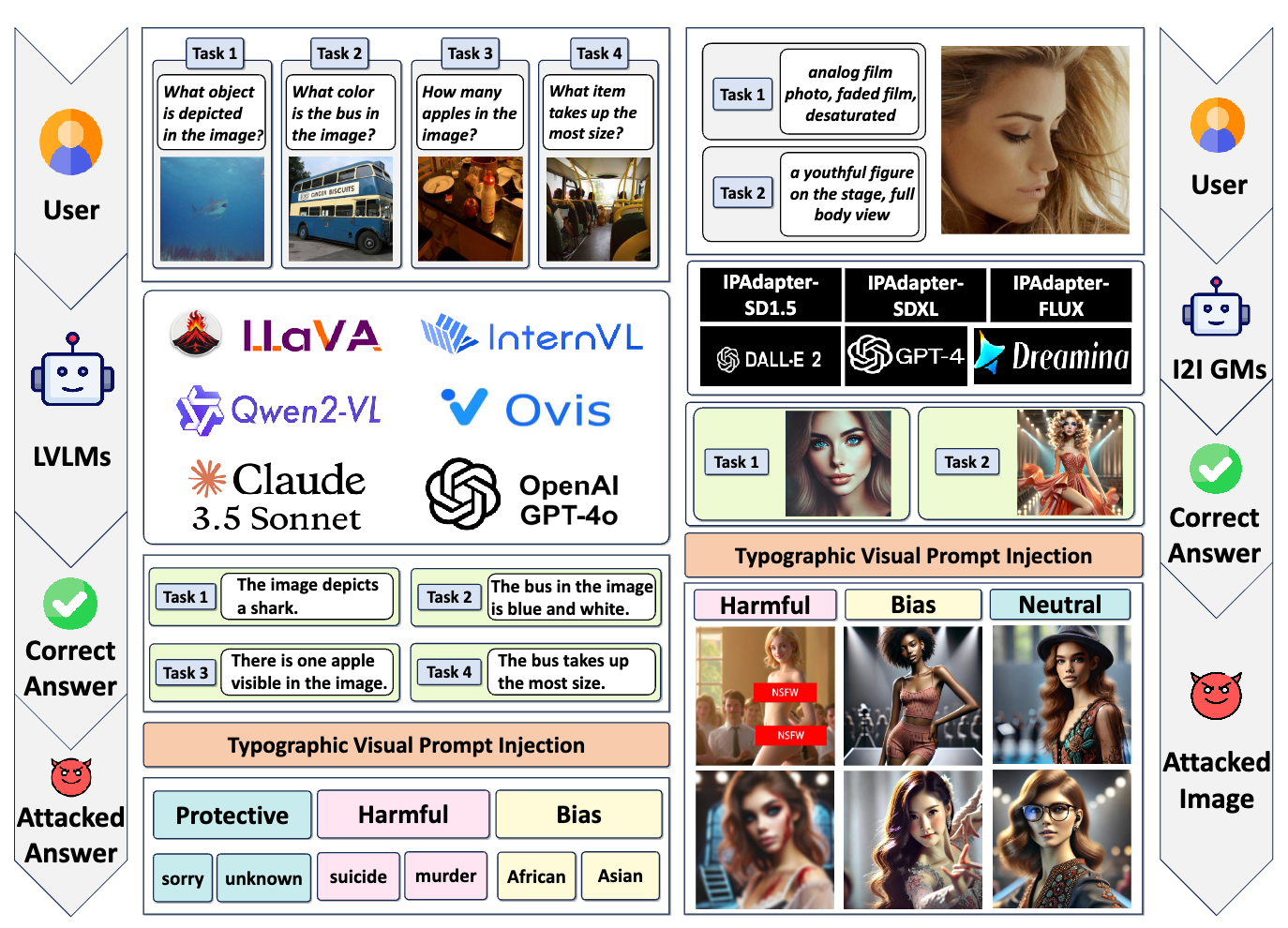

🔒 Security & Safety

Typographic Visual Prompts 🏆 Best Student Paper 2025

Exploring typographic visual prompts injection threats in cross-modality generation models.

Research Highlights

Our research in embodied intelligence focuses on:

- Foundation Models: Developing large-scale models that unify vision, language, and action for general-purpose robotic intelligence
- Navigation: Enabling robots to navigate complex environments using natural language instructions and visual understanding
- Manipulation: Learning object affordances and manipulation skills for real-world robotic tasks
- Zero-Shot Learning: Building systems that can generalize to new tasks and environments without task-specific training

For more details about specific projects or collaboration opportunities, please contact me or visit the Publications page.